VMware Private AI Foundation with NVIDIA – a Technical Overview

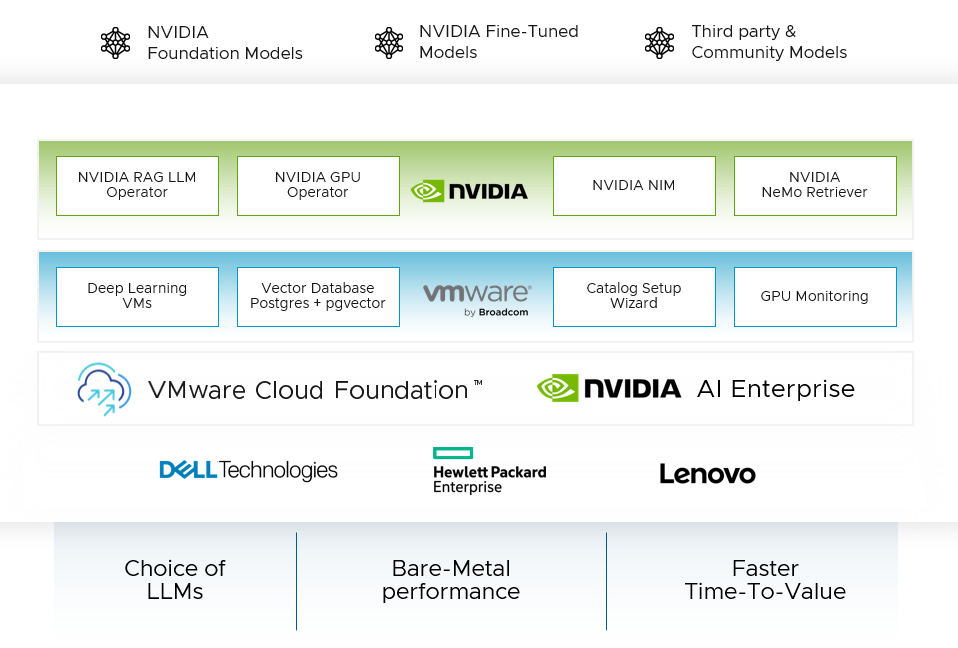

In this article, we explore the new features in the VMware Private AI Foundation with NVIDIA. This is an add-on product to the VMware Cloud Foundation (VCF). The set of AI tools and platforms within that VMware Private AI Foundation with NVIDIA platform are developed as part of an engineering collaboration between VMware and NVIDIA.

We describe the technical values that this new platform brings to data scientists and to the DevOps people who serve them, with the following outline:-

- Providing an improved experience for the data scientist in provisioning and managing their AI platforms using VCF tools

- Deep learning VM images as a building block for these data science environments

- Using a Retrieval Augmented Generation (

RAG) approach with NVIDIA’s Large Language Model (LLM) microservices - Monitoring and your GPU consumption and availability from within the VCF platform

We first look at the outline architecture for VMware Private AI Foundation with NVIDIA to see how the different layers are positioned within it with respect to each other. We will dig into many of these areas in this article and how they are used for implementing applications on VMware Private AI Foundation with NVIDIA.

-

Improved Experience for the Data Scientist

Our objective in this section is to quickly deploy and optimize the development environment that data scientists want in a self-service way. We do this by automating many pieces of the infrastructure needed to support the models, libraries, tools, and UIs that data scientists like to use. This is done in a VCF tool called Aria Automation. What a data scientist sees, once some background work is done by the administrator or platform engineer, is a user interface with a set of tiles they can use to request a new environment. An example of this user interface is shown below:

VMware Aria Automation is a key tool within the VMware Cloud Foundation suite. It provides a Service Broker function with customizable user “quickstart” features that show up as tiles or catalog items in the user interface. Examples of those quickstart items appear in the “AI Kubernetes Cluster” and “AI Workstation” examples above. Aria Automation allows you to custom build your own quickstart templates to suit your own data scientists’ needs. There are likely to be a number of these custom deployment templates available in your organization. There are tools for creating these in a graphical way within the Aria Automation environment. We explore one of the built-in catalog items here, for creating an AI Workstation for a data scientist.

A data scientist user would need some basic information about their desired GPU requirement (e.g. one, two or more full GPUs or a

Here the user first decides on a name for their new deployment. This name will identify a namespace within vSphere into which one or more of the resulting VMs will be placed. An important decision that is made here is the “VM Class”. There are a number of these to choose from – all are set up in advance by a systems administrator/DevOps person. The choice of VM Class here determines the amount of GPU power and the number of GPUs that will be assigned to this deployment (at the VM level). This is a similar concept to the vGPU Profile that is commonly used in setting up NVIDIA’s vGPU software on VMware vSphere Client, but here we are referring to a class of VMs, not an individual one. The user may also choose to use higher values for the CPUs and Memory that are allocated to their deployment VM, or VMs - provided a suitable VM Class is available for that purpose.

Once that GPU-related decision is taken, we move on to deciding on the AI tooling and containers that will be present in the deployed VM that Aria Automation creates. In the

An important example is the Generative AI Workflow for a RAG application example seen below. This will give us a pre-built application that is capable of being used as a template or prototype for a RAG-based solution, using multiple NVIDIA microservices, such as the NVIDIA NeMo Retriever and the NVIDIA Inference Microservice (NIM) from the NVIDIA AI Enterprise 5.0 Suite. We see below the pre-built RAG Workflow software bundle being chosen. The set of microservices/containers supporting this workflow can be loaded into a

At this point, we are ready to submit our request for the deployment of the AI Workstation. From this point onwards, the data scientist does not need to interact with the system. Meanwhile, one or more VMs are deployed automatically by the Aria Automation tool, with all the necessary data science microservices and tools loaded into them. One can monitor the progress of this automated deployment using the Service Broker tool as seen here.

Once the deployment is done, we see the summary page below, indicating the deployed VMs are now running and they have all the tooling from the source repositories that were chosen. All necessary tools and platforms will be fully downloaded and ready to go. At this point, the data scientist user would simply click on the “Applications” link and be taken straight into their environment. In this case, we go straight to the end user application. In other cases, the data scientist may enter a Jupyter Notebook to do further development.

2. Deep Learning VMs - the Building Blocks for Automation

In a basic AI Workstation deployment, a VM running within a namespace is created that has NVIDIA's microservices and tooling deployed in it. This is seen by the systems administrator or D

3. An Example Retrieval Augmented Generation Application with a Chatbot Interface

Our deployment contains an example of a chatbot application that uses the RAG architecture. Below we see the application’s user interface. The application handles questions from the end user. To give answers to the user's questions, the RAG design makes use of the LLaMa-2 model, deployed on the NVIDIA Inference Microservice (NIM), along with a vector database (implemented using pgVector in this case). The user-visible radio button in the UI named “Use Knowledge Base” that is shown as activated here, instructs the application to use the vector database as a source of indexed documents and embedding information to answer the user’s question. That question may not have a correct answer in the LLaMa 2 model’s training data, so it cannot answer questions that refer to “private” data. Here we use “what is llamaindex?” as a sample question to our chatbot application. This is a proxy for questions about private enterprise data, that is not known to our model - and so the vector database augments the model with newer, private data than it has seen before.

In fact, LlamaIndex is a modern development that is used behind the scenes by the RAG application itself for access to data sources. You will notice that the application, when using RAG, answers the question correctly. Without RAG in the picture, the model answers that it cannot answer the Llamaindex question (because it was not trained on data about that subject).

If the data scientist is curious about the microservices (containers) that are deployed to support this chatbot application, then they can see these by logging into their new deep learning VM. This can be done from the deployment page in Aria Automation by using the “Services” -> SSH with the address of the new VM.

On logging in to their VM, with their own password that was chosen earlier at request submission time, the data scientist can see their microservices names with their status.

That Retriever Embedding model, running as a microservice, also creates word embeddings for queries executed on the vector database to allow it to answer user questions. The pgVector microservice seen at #5 above uses a Postgres database instance running within the enterprise separately to these microservices We provision such PostGres databases using a VCF tool called Data Services Manager, that presents a UI for provisioning several different databases.

If the user required this kind of application to be deployed on Kubernetes, then the NVIDIA AI Enterprise 5.0 suite of microservices includes a “RAG LLM Operator” that can be used with Helm for that purpose – in a separate deployment. There are many different RAG-based application examples seen in the appropriate NVIDIA NGC repository.

Private Containers/Microservices

Depending on the privacy level that is required by the enterprise,

4. Monitoring GPU Consumption and Availability

Within the VMware Cloud Foundation portfolio, there are configurable views you can use or build of your GPU estate, embodied in tools such as Aria Operations seen below. We can choose a particular cluster of host servers and observe the GPU consumption for that cluster only. A cluster may be dedicated to one group of users within a certain Workload Domain in VMware Cloud Foundation, for example. We can drill down here to individual hosts within the cluster and to individual GPU devices within a host server. The second from left navigation pane here shows the cluster members, i.e. the set of physical host servers, and the pane to the right of that shows the physical GPUs on the selected host, identified by the PCIe bus ID here. We have full customization power over the metrics shown in the graphs within the rightmost panel here. We can compare these different metrics using the Aria Operations tool.

Lastly, if we want to see the overall cluster’s performance as a function of the GPU power used on that cluster of hosts, we can use the GPU metrics seen here to get a view on how the whole cluster is behaving, not just with respect to its total GPU consumption, but all other factors as well.

These views of GPU usage and availability are very important to the systems administration team who want to see what remaining capacity they have in the GPUs on all clusters. This is key to doing accurate capacity planning for new applications they want to deploy. Future developments in this area are being investigated to allow further drill down to the VM and vGPU levels.

Conclusion

In this article, we explored the VMware Private AI Foundation with NVIDIA technology, to understand the ease of use the system provides to the data scientist at provisioning time and further at ongoing management time.

We used the Aria Automation capability for customizing AI deployment types and for removing the infrastructure details from the data scientist’s concerns, allowing them to be more productive in their modeling and inference work. We used a set of Deep Learning VM images as a basis for this provisioning step, where these images can be downloaded from VMware or indeed custom-built to serve the needs of a particular enterprise. A sample RAG application was deployed on a single VM as a proof-of-concept. That application makes use of the NVIDIA Inference Microservice (NIM) and NeMo Retriever Embedding Microservice, along with the pgVector database technology to support the end-use application, the LLM UI. We used the Harbor container repository to maintain a private collection of tested microservice containers for ease of download and for privacy. We explored the monitoring and management capabilities of the Aria Operations tool within the VMware Cloud Foundation to determine the current and past state of our GPU consumption, to do capacity planning and optimization of the GPU estate. This comprehensive set of technologies shows the commitment of the VMware by Broadcom and NVIDIA organizations to making you successful in your work on Large Language Models and Generative AI to optimize your business.

To register your interest for access to the VMware Private AI with NVIDIA, complete this form Note that submitting this form does not guarantee access to the software. Availability is subject to approval and may be limited.

Further Reading

VMware Private AI Foundation with NVIDIA - Solution Brief

VMware Private AI Foundation with NVIDIA Guide

Announcing Initial Availability of VMware Private Foundation AI with NVIDIA

VMware Private AI Foundation with NVIDIA - Server Guidance

Aria Automation March 2024 (8.16.2) - Private AI Automation Services for NVIDIA

Automation Services for VMware Private AI

Retrieval-Augmented Generation - Basics for the Data Center Admin

Data Modernization with VMware Data Services Manager