Using vSAN as a Management Cluster

Introduction

The introduction of VMware vSphere into data centers everywhere also popularized the deployment practice of management clusters. They are now a staple of data centers large and small, and most administrators have experience running some form of management cluster. While management clusters come in all shapes and sizes, the intention is largely the same: Establish a boundary of resources to run an assortment of systems that support the infrastructure and aid in the operation of a data center. Common examples include but are not limited to directory services, host name resolution through DNS, IP address management through DHCP, time synchronization through NTP, and other services that provide critical data logging and analytics.

But the inevitable question arises: Can vSAN be used to power a management cluster? Should it be used to power a management cluster? The short answer is “Yes!” Let's go into why this approach makes sense.

The Challenge of Management Clusters Using Three-Tier Architectures

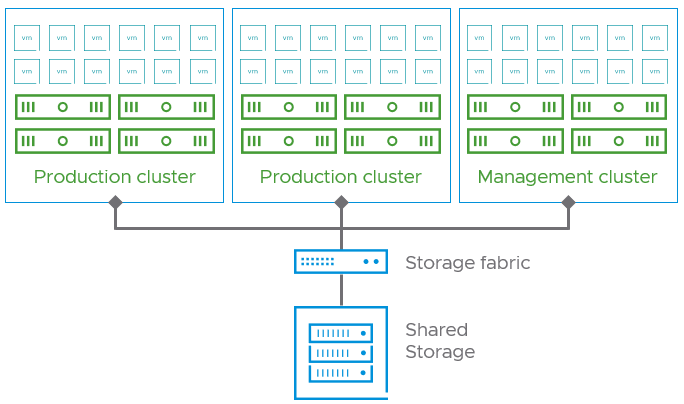

Consider an environment powered by a traditional three-tier architecture, where you have vSphere clusters using a dedicated storage fabric connected to a storage array providing shared storage to potentially dozens and dozens of hosts, as shown in Figure 1.

Figure 1. Traditional three-tier architecture with management cluster.

With three-tier architectures, management clusters are often the result of little more than the act of carving out a simple cluster in vSphere and moving the appropriate VMs supporting the infrastructure to the new cluster. While this does help isolate some resources such as compute and memory, it does not meet the desired outcome of true isolation. The following are examples of what can be found in suboptimal management cluster implementations.

- A management cluster using the same storage array(s) that the production clusters are using. This can pose challenges such as circular dependencies in maintenance scenarios of the array or the backing fabric used for connectivity. If the storage array is offline permanently or temporarily, the ability to provide those infrastructure services such as DNS may also be compromised. Arrays that serve multiple clusters can also be subject to noisy neighbor issues, where VMs from one cluster are unduly impacting the performance of VMs in another cluster.

- Partial or full use of the same network connectivity as production clusters. This occurs quite often in environments where the network topology cannot provide the management cluster with some level of isolation from the switching used by other clusters.

- A storage array no longer under manufacturer maintenance is repurposed for use with the management cluster. This starts with good intentions, as the administrator may have recognized the need for independent storage, but it builds the solution on unsupported hardware that would otherwise have been decommissioned. The practice gives the illusion of being cost-effective, yet it introduces risk and complexity and is not an effective long-term strategy.

- Direct attached, local storage. This attempts to work around the need for shared storage but compromises the availability of infrastructure-related VMs. What starts as a simple idea ends up being an administrative burden that puts the availability of services at risk.

These examples demonstrate the challenges of using a traditional architecture with a management cluster.
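The circular dependency described in the first example can be made concrete with a small dependency graph. The following is a minimal sketch, not a tool from any VMware product: the component names and the edges between them are hypothetical, and the assumption that the shared array itself relies on DNS is there only to illustrate how a cycle forms.

```python
from typing import Dict, List, Optional

# Hypothetical dependency maps: each key needs every item in its list to be up.
THREE_TIER: Dict[str, List[str]] = {
    "production-cluster": ["shared-array", "dns"],
    "management-cluster": ["shared-array"],  # management VMs live on the same array
    "dns": ["management-cluster"],           # DNS runs as a VM in the management cluster
    "shared-array": ["dns"],                 # assumed: array management resolves names via DNS
}

# Same services, but the management cluster owns its storage (no external array).
ISOLATED: Dict[str, List[str]] = {
    "production-cluster": ["shared-array", "dns"],
    "management-cluster": [],
    "dns": ["management-cluster"],
    "shared-array": ["dns"],
}


def find_cycle(graph: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one dependency cycle as a list of nodes, or None if there is none."""
    unvisited, in_progress, done = 0, 1, 2
    state = {node: unvisited for node in graph}
    path: List[str] = []

    def visit(node: str) -> Optional[List[str]]:
        state[node] = in_progress
        path.append(node)
        for dep in graph.get(node, []):
            dep_state = state.get(dep, unvisited)
            if dep_state == in_progress:
                # dep is already on the current path, so the path loops back to it.
                return path[path.index(dep):] + [dep]
            if dep_state == unvisited:
                found = visit(dep)
                if found:
                    return found
        path.pop()
        state[node] = done
        return None

    for node in graph:
        if state[node] == unvisited:
            cycle = visit(node)
            if cycle:
                return cycle
    return None
```

Calling `find_cycle(THREE_TIER)` reports a loop through the shared array, DNS, and the management cluster, while `find_cycle(ISOLATED)` returns `None` because the management cluster no longer depends on anything outside itself.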

Management Clusters Powered by vSAN

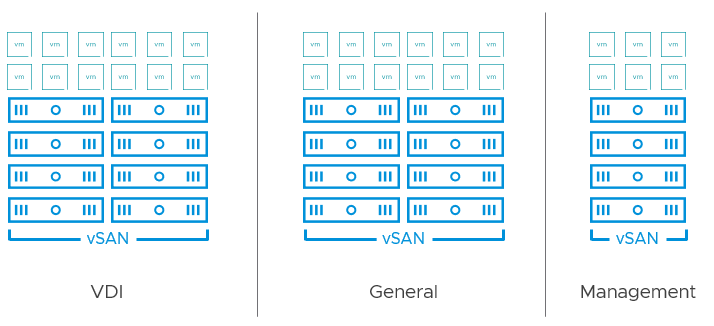

vSAN helps address the challenges of designing a fully independent management cluster. Unlike three-tier architectures, vSAN treats storage as an exclusive resource of the cluster in its default, non-disaggregated topology. Beyond the granular capabilities that storage policy-based management gives to VMs running within the cluster, this model isolates storage traffic to VMs living within that cluster, as shown in Figure 2. This greatly simplifies dependency trees during planned and unplanned maintenance events. Lines of demarcation are very clear with vSAN.

Figure 2. Storage I/O is properly isolated when using a vSAN-powered management cluster.

vSAN addresses all the challenges outlined above in an easy and flexible way. Rather than use hardware resources that were provisioned for resource-intensive production workloads, one can tailor the build of a vSAN management cluster to fit these infrastructure-level services. For example, many of your vSAN clusters may consist of dual-processor hosts with high-performance storage devices, large amounts of RAM, and other customizations. In many cases, the management VMs serving the infrastructure have modest requirements and can run on vSAN ReadyNodes with fewer resources and value-based componentry.

Recommendation: If possible, use the vSAN Express Storage Architecture (ESA). The ESA allows for all-new levels of efficiency with lower TCO, and these benefits apply to management clusters as well as production clusters. Given the introduction of a new entry-level ESA-AF-0 ReadyNode profile, and the support of new low-cost Read-Intensive devices in these ReadyNodes, the ESA is ideal for a small, low-cost management cluster.

Management clusters running vSAN can easily adapt to change. Some smaller environments choose to run their log aggregators and infrastructure analytics applications in their management cluster. Resilience objectives can easily be controlled per VM or VMDK using storage policies. As the environment grows, resources can be added to that same cluster by scaling up or scaling out. Eventually, those specific workloads may be shifted to another cluster if the desire is to keep the management cluster relatively small.

Recommendation: Configure a management cluster powered by vSAN ESA with at least 4 hosts. Using the ESA, one can store data resiliently using space-efficient RAID-5 erasure coding while still having an additional host to accommodate a sustained host failure. In that situation, the data can automatically regain its prescribed level of resilience.
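The arithmetic behind the 4-host recommendation can be sketched in a few lines. This example assumes a RAID-5 stripe of 2 data fragments plus 1 parity fragment, which is the layout the ESA is understood to use on small clusters; the class and function names are hypothetical and the capacity figure is illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Raid5Layout:
    """A RAID-5 stripe described by its data and parity fragment counts."""
    data: int
    parity: int

    @property
    def width(self) -> int:
        # Each fragment of a stripe must sit on a different host.
        return self.data + self.parity

    @property
    def overhead(self) -> float:
        # Raw capacity consumed per unit of usable capacity.
        return self.width / self.data


def spare_hosts(hosts: int, layout: Raid5Layout) -> int:
    """Hosts left over beyond the minimum needed to place one full stripe."""
    return hosts - layout.width


def can_rebuild_after_host_failure(hosts: int, layout: Raid5Layout) -> bool:
    """True if a full stripe still fits on the hosts that remain after one fails."""
    return hosts - 1 >= layout.width


def raw_capacity_needed_gb(usable_gb: float, layout: Raid5Layout) -> float:
    """Raw capacity consumed to store the given amount of usable data."""
    return usable_gb * layout.overhead


# Assumed layout for a small ESA cluster: 2 data + 1 parity.
SMALL_CLUSTER_RAID5 = Raid5Layout(data=2, parity=1)
```

Under these assumptions, a 3-host cluster can place the stripe but has nowhere to rebuild it after a sustained host failure, while a 4-host cluster keeps one spare host for the rebuild. The 2+1 layout consumes 1.5 times the usable capacity, compared with 2 times for a two-copy mirror.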

Since vSAN communicates over traditional IP networking, many environments have more flexibility when it comes to providing a switch topology that is independent of switchgear serving other clusters, versus their dedicated storage fabrics. This allows cluster design to address a desired result for the applications, rather than comply with a current constraint of hardware, or a topology.

Recommendation: Consider independent networking and power supplies for your management cluster. This prevents reliance on the networking fabric and power distribution providing resources to the production environment.

vCenter Server on vSAN

You might ask, what about running vCenter Server on vSAN? Isn’t there a dependency? While vCenter Server is the most common way to interact with a vSAN cluster, much of the vSAN control plane resides across the hosts that make up the cluster. The data path and all low-level activities, such as object management, health, and performance monitoring, are independent of vCenter Server.

If the management cluster needs to be powered down, it can be shut down easily from the vCenter Server user interface using the vSAN Cluster Shutdown workflow. The workflow is also available programmatically when using PowerCLI 13.1 with vSAN 8 U1. vSAN can also accommodate a vCenter Server instance that has been restored from a backup, or a pristine vCenter Server that has been rebuilt to replace an unavailable vCenter Server. See the topic "Replacing vCenter Server on Existing vSAN Cluster" in the vSAN Operations Guide for more information.

Summary

Using vSAN to power your management cluster makes sense. It provides an easy way to run systems that support the infrastructure in an independent manner, without compromising availability and operational objectives.