What’s New with vSphere 8: Core Storage Announcements

vSphere 8 brings some exciting announcements, and Core Storage is no exception, with several new features and enhancements. Below is a summary of them, and at the end of the blog is a link to a deeper technical article.

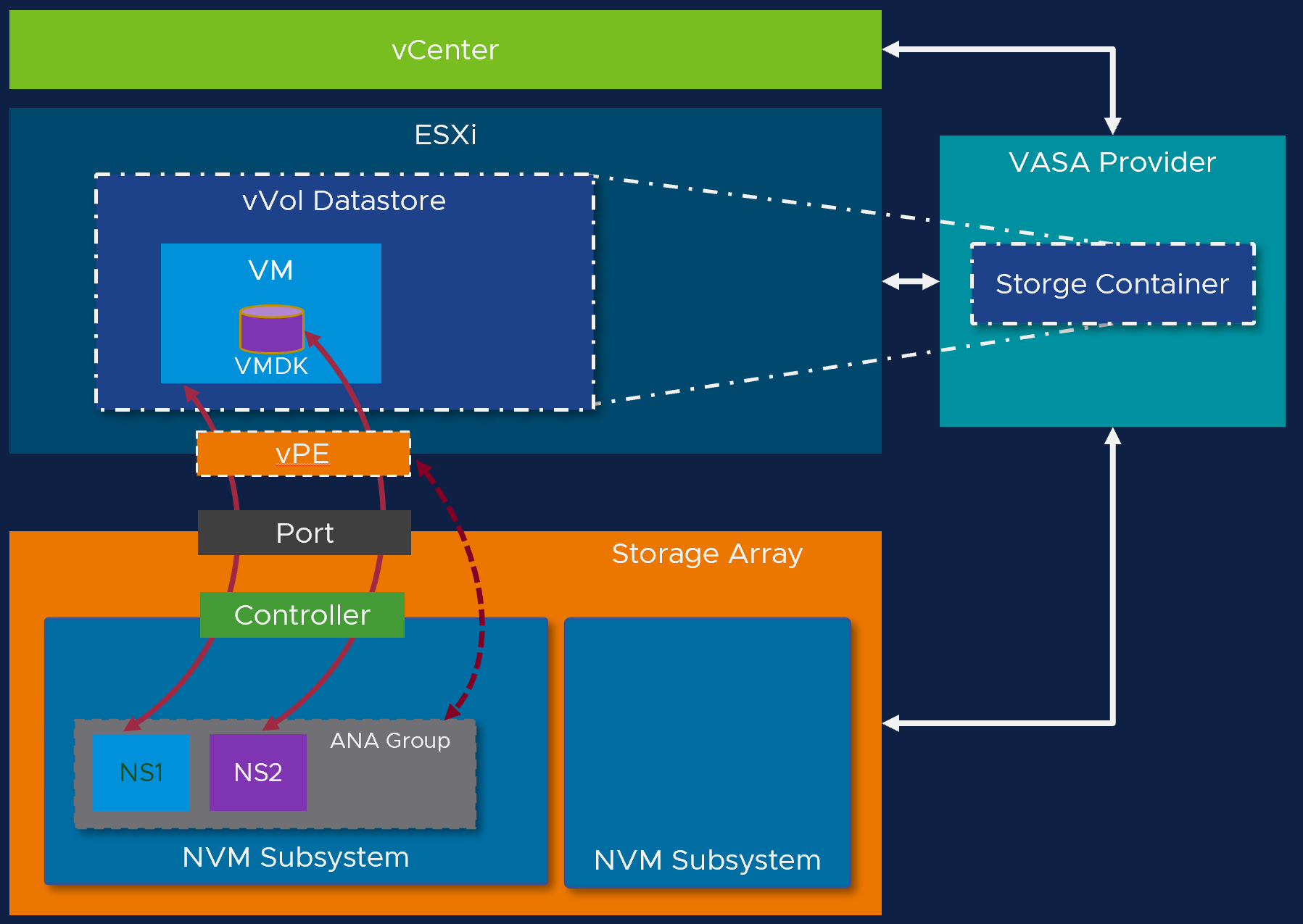

NVMeoF vVols

The biggest announcement is support for vVols with NVMeoF. NVMeoF continues to grow in popularity across the industry because it delivers better performance and lower latency than traditional SCSI. NVMe was designed for flash, so connecting to an all-flash NVMe array over SCSI is an inherent bottleneck. With NVMeoF vVols, each vVol object becomes an NVMe Namespace, and these Namespaces are grouped into an ANA (Asymmetric Namespace Access) group. With NVMe, many of the commands are in-band, which makes communication between vSphere hosts and the array more efficient.
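To make the object mapping above more concrete, here is a minimal conceptual model in Python. It is a sketch under stated assumptions, not how ESXi or any array implements it: the class and function names, the sequential namespace IDs, and the single ANA group are all hypothetical. Only the ANA state names come from the NVMe specification. The point it illustrates is that each vVol object gets its own namespace, while the access state is tracked per ANA group, so a path state change applies to every namespace in the group at once.

```python
from dataclasses import dataclass, field
from enum import Enum


class AnaState(Enum):
    # ANA states defined by the NVMe specification
    OPTIMIZED = "optimized"
    NON_OPTIMIZED = "non-optimized"
    INACCESSIBLE = "inaccessible"
    PERSISTENT_LOSS = "persistent-loss"
    CHANGE = "change"


@dataclass
class Namespace:
    nsid: int       # namespace ID (hypothetical: assigned sequentially here)
    vvol_name: str  # the vVol object this namespace represents


@dataclass
class AnaGroup:
    group_id: int
    # ANA state is reported per group (per controller); one controller is assumed
    state: AnaState
    namespaces: list[Namespace] = field(default_factory=list)


def map_vvols_to_namespaces(vvol_names, group_id=1, state=AnaState.OPTIMIZED):
    """Hypothetical helper: one namespace per vVol object, all in one ANA group."""
    group = AnaGroup(group_id=group_id, state=state)
    for nsid, name in enumerate(vvol_names, start=1):
        group.namespaces.append(Namespace(nsid=nsid, vvol_name=name))
    return group


group = map_vvols_to_namespaces(["vm1.config", "vm1.data", "vm1.swap"])
print([(ns.nsid, ns.vvol_name) for ns in group.namespaces])
# prints [(1, 'vm1.config'), (2, 'vm1.data'), (3, 'vm1.swap')]
```

Keeping the state on the group rather than on each namespace mirrors the ANA design: the host learns the path state once for the whole group instead of once per vVol.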

This support keeps vVols current with the latest storage technologies and shows that vVols are VMware’s primary focus for external storage.

vVols Enhancements

Because vVols are the primary focus for engineering, we have also made several performance improvements to vVols:

- VM swap improvements. Improved performance during power-on and power-off operations and during vMotion.

- Keep the config-vvol bound. This avoids additional latency when vSphere systems query the VM home.

NVMeoF Enhancements

VMware has also improved NVMeoF and added new features:

- We have increased the Namespace and path limits to 256 Namespaces and 2K paths, further improving scalability.

- Extended reservation support for NVMe devices. This allows customers to use the Clustered VMDK feature for clustered applications, such as Microsoft WSFC, on an NVMeoF Datastore.

- Advanced NVMe-oF Discovery Service support in ESXi. This simplifies discovering NVMe controllers in the environment when using NVMe-TCP.

Space Reclamation

In vSphere 6.7, we added automatic space reclamation on supported Datastores. In some large environments with numerous Datastores and hundreds or even thousands of VMs, customers have reported that massive reclamations impact the array. One example is a power-off storm in a large VDI environment: everyone leaves at the end of the day, and thousands of VMs are powered off simultaneously. Thousands of swap disks are deleted at once, and even at the default rate of 25 MB/s, reclaiming that much space could overwhelm the array.

To address this, we have added the ability to lower the reclamation rate to 10 MB/s, reducing the impact on the array.
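The trade-off behind a lower rate is simple arithmetic, sketched below in Python. This is illustrative only and does not call any vSphere API. It assumes the reclamation rate applies to each Datastore independently, so the worst-case load on the array is the number of Datastores reclaiming at once multiplied by the rate; the function names, the 50 Datastores, and the 100 GB of freed space per Datastore are hypothetical numbers, not measurements.

```python
def reclaim_seconds(freed_mb: float, rate_mb_per_s: float) -> float:
    """Time to reclaim freed_mb of space at a fixed reclamation rate."""
    if rate_mb_per_s <= 0:
        raise ValueError("rate must be positive")
    return freed_mb / rate_mb_per_s


def peak_unmap_load(datastores: int, rate_mb_per_s: float) -> float:
    """Assumed upper bound on array load if every Datastore reclaims at once."""
    return datastores * rate_mb_per_s


# Hypothetical power-off storm: 50 Datastores, 100 GB (102,400 MB) freed on each
for rate in (25, 10):
    hours = reclaim_seconds(102_400, rate) / 3600
    print(f"{rate} MB/s: peak {peak_unmap_load(50, rate):.0f} MB/s, "
          f"{hours:.2f} h per Datastore")
# prints:
# 25 MB/s: peak 1250 MB/s, 1.14 h per Datastore
# 10 MB/s: peak 500 MB/s, 2.84 h per Datastore
```

Under these assumptions, dropping to 10 MB/s cuts the peak reclamation load by 60% at the cost of the space being returned to the array about 2.5 times more slowly.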

VMFS and vSAN Direct Disk Provisioning Storage Policy

DevOps admins can now choose the disk provisioning type through Storage Policy Based Management (SPBM). Pair an SPBM policy with a Storage Class to define eager-zeroed thick (EZT), lazy-zeroed thick (LZT), or thin provisioning.

NFS Enhancements

We have continued to enhance traditional storage types, including NFS. With vSphere 8, we have increased NFS resilience with:

- Retry NFS mounts on failure

- NFS mount validation

For more detail on each feature, go to the technical article on core.vmware.com.

What’s New with vSphere 8 Core Storage

VMware storage engineering has been working hard on vVols and NVMeoF, as well as VMFS and NFS features. More exciting features are planned in the near future, and I can’t wait to share them with the community!